gRPC: The Scalable Bridge

I’ve been sketching out a new system lately: distributed agents scattered across the wire, with a Go orchestrator acting as the brain and Python workers serving as the hands. When you’re trying to move at scale, you can’t afford a tremor in the heartbeat. You need a way for them to speak that doesn’t waste a single breath.

For years, we built our systems like sprawling, noisy bazaars. We’d throw heavy, uncompressed JSON payloads over the fence using HTTP/1.1, just hoping the other side wouldn't choke on the sheer volume of text. It was honest work, maybe, but it was slow, bloated, and fragile, like building a bridge on a foundation of loose sand. We spent more time unfolding the packaging than actually using the goods inside.

That’s why I landed on gRPC.

Google spent over a decade perfecting this craft internally under the name “Stubby”, just to keep its world from collapsing under the weight of billions of whispers a second. In 2015 it opened the gates and handed the blueprints to the community as gRPC. Today, it isn’t just another library; it’s the quiet, steel-and-stone architecture that keeps a modern microservice from shaking itself apart when the wind picks up.

I. The Lineage: From RPC to gRPC

For a long time, we chased the RPC Ancestry. The dream was simple: make calling a function on a remote server (a Remote Procedure Call) look identical to a local function call in your code.

Then came the REST Rebellion. In the 2010s, we all agreed on a simpler way: HTTP/1.1 and JSON, because they were human-readable. It was the language of the people, decoupled and easy to grasp. But as our systems grew to thousands of nodes, we started paying the REST Tax. We were spending all our energy just opening envelopes, reading headers, and parsing strings. At scale, that isn’t just overhead; it’s a ceiling you eventually hit.

Now, we’re in the gRPC Era. It’s a return to the contract-first discipline of the old days, but supercharged with the modern speed of HTTP/2 and the binary efficiency of Protocol Buffers.

Use Cases: Where to Deploy vs. Where to Avoid

Deploy: Use it for internal microservice meshes and high-throughput AI workloads, where every millisecond of latency feels like money bleeding out. It is also a strong fit for real-time IoT telemetry and streaming data.

Avoid: Steer clear of it for public-facing API gateways where you don’t control the client, and for simple CRUD apps where developer velocity outweighs raw performance. Browsers are another sticking point. Their fetch and XHR APIs don’t give JavaScript the low-level HTTP/2 control that gRPC relies on, such as reading trailers. Browser clients therefore typically go through gRPC-Web and a translating proxy such as Envoy.

II. The CPU Toll: Why Binary Beats Text

We often blame the network when things get slow, but the real bottleneck is often deserialization (the act of turning a message back into something a program can use), the silent killer of backend performance.

The JSON Burden

Think of JSON like a handwritten letter. When a server receives {"id": 150}, the CPU must scan the payload character by character. It must find the opening brace, locate the quotes, extract the string "id", allocate memory, and then convert the ASCII characters 1, 5, 0 into a machine-level integer.

At the scale of thousands of requests per second, you’re burning immense CPU cycles just on reading.

The Protobuf Advantage

gRPC uses Protocol Buffers (Protobuf), which does not parse text; it decodes a compact binary layout. When the server receives a binary sequence like 0x08 0x96 0x01, the generated code already knows what it means. 0x08 is a key saying “field 1, varint”, and 0x96 0x01 reassembles into 150 with a couple of masks and bit shifts. The value drops directly into the right struct field. There is no searching for braces and no converting of digit strings. It’s a mechanical, nearly frictionless transfer of information.
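To make the contrast concrete, here is a minimal hand-rolled decoder for those three bytes, shown next to the JSON text that carries the same value. It uses only the standard library and no protobuf runtime. It assumes field 1 is a varint, which is what the key byte 0x08 says. A real generated parser handles every field and wire type; this sketch only shows the mask-and-shift work involved.

```python
import json

JSON_PAYLOAD = b'{"id": 150}'              # 11 bytes of text
PROTO_PAYLOAD = bytes([0x08, 0x96, 0x01])  # key byte, then varint 150


def decode_varint(buf: bytes, pos: int) -> tuple[int, int]:
    """Read a base-128 varint starting at pos; return (value, next_pos)."""
    result, shift = 0, 0
    while True:
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift  # low 7 bits carry the payload
        if not byte & 0x80:               # MSB clear: this was the last byte
            return result, pos
        shift += 7


def decode_single_field(buf: bytes) -> tuple[int, int, int]:
    """Decode one (field_number, wire_type, value) triple; varint fields only."""
    key, pos = decode_varint(buf, 0)
    field_number, wire_type = key >> 3, key & 0x07
    if wire_type != 0:
        raise ValueError("this sketch only handles varint fields")
    value, _ = decode_varint(buf, pos)
    return field_number, wire_type, value


if __name__ == "__main__":
    print(decode_single_field(PROTO_PAYLOAD))
    print(json.loads(JSON_PAYLOAD)["id"])
    print(len(JSON_PAYLOAD), "bytes of JSON vs", len(PROTO_PAYLOAD), "bytes of Protobuf")
```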

III. The Transport Engine: HTTP/2 and HPACK

Shrinking the payload is only half the battle. The true performance of gRPC rests on the capabilities of HTTP/2.

1. Annihilating Head-of-Line Blocking (Multiplexing)

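In HTTP/1.1, responses on a connection come back strictly in order. If a client needs to make ten parallel requests, it has two options. It can open ten separate TCP connections, paying for ten TCP and TLS handshakes, which is heavy and slow. Or it can queue the requests on one connection, where each waits for the ones ahead of it. That waiting is Head-of-Line blocking: it’s like being stuck behind a slow truck on a single-lane road.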

HTTP/2 introduces multiplexing. gRPC maps every RPC to its own HTTP/2 stream, so many concurrent requests and responses can be interleaved as frames over a single, persistent TCP connection. It turns that single-lane road into a multi-lane highway. One caveat: this removes head-of-line blocking at the HTTP layer, but a lost TCP packet can still stall every stream on the connection until it is retransmitted.
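Here is a toy model of what multiplexing looks like on the wire. Each frame carries a stream ID, frames from different RPCs are interleaved on one connection, and the receiver reassembles them per stream. This is a simplified sketch and the `Frame` type is hypothetical. Real HTTP/2 frames also carry a 9-byte header with a type and flags.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    stream_id: int     # client-initiated HTTP/2 streams use odd IDs: 1, 3, 5, ...
    payload: bytes
    end_stream: bool   # True on the last frame this stream will send


def interleave(messages: dict[int, list[bytes]]) -> list[Frame]:
    """Round-robin the chunks of several streams onto one shared 'connection'."""
    queues = {sid: list(chunks) for sid, chunks in messages.items()}
    frames = []
    while any(queues.values()):
        for sid, queue in queues.items():
            if queue:
                chunk = queue.pop(0)
                frames.append(Frame(sid, chunk, end_stream=not queue))
    return frames


def demultiplex(frames: list[Frame]) -> tuple[dict[int, bytes], list[int]]:
    """Reassemble per-stream bodies and record the order in which streams finish."""
    buffers: dict[int, bytearray] = defaultdict(bytearray)
    finished = []
    for frame in frames:
        buffers[frame.stream_id] += frame.payload
        if frame.end_stream:
            finished.append(frame.stream_id)
    return {sid: bytes(buf) for sid, buf in buffers.items()}, finished


if __name__ == "__main__":
    wire = interleave({1: [b"slow-", b"report"], 3: [b"ping"], 5: [b"user-", b"42"]})
    bodies, finished = demultiplex(wire)
    print(bodies)
    print(finished)  # stream 3 completes first even though stream 1 started first
```

The short `ping` on stream 3 is not stuck behind the longer `report` on stream 1, which is the whole point of giving each RPC its own stream.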

2. HPACK Header Compression

REST APIs over HTTP/1.1 transmit heavy text headers (Authorization: Bearer..., User-Agent) in full with every single request. gRPC inherits HPACK header compression from HTTP/2. The sender and the receiver each maintain a table of previously seen headers. If an auth token hasn’t changed, the sender transmits a tiny integer index instead of the full string. This can slash header overhead by as much as 85% to 90% on repeated requests.
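Here is a deliberately simplified model of the indexing idea behind HPACK. The encoder and decoder each keep a table of header fields they have already seen, so a repeated header costs an index instead of the full string. The real RFC 7541 format also has a 61-entry static table, Huffman coding, table-size limits, and eviction. This sketch keeps only the dynamic-table idea. The indices here are plain list positions rather than HPACK's numbering, and the `HeaderTable` class is hypothetical.

```python
class HeaderTable:
    """Toy shared-state header table; the encoder and decoder each hold one copy."""

    def __init__(self) -> None:
        self.entries: list[tuple[str, str]] = []

    def encode(self, headers: list[tuple[str, str]]) -> list[object]:
        out: list[object] = []
        for field in headers:
            if field in self.entries:
                out.append(self.entries.index(field))  # already known: send an index
            else:
                out.append(field)                      # new: send the literal...
                self.entries.append(field)             # ...and remember it
        return out

    def decode(self, encoded: list[object]) -> list[tuple[str, str]]:
        headers = []
        for item in encoded:
            if isinstance(item, int):
                headers.append(self.entries[item])     # look up a previously seen field
            else:
                headers.append(item)
                self.entries.append(item)              # mirror the encoder's table update
        return headers


if __name__ == "__main__":
    request_headers = [("authorization", "Bearer abc123"), ("user-agent", "demo-client")]
    encoder, decoder = HeaderTable(), HeaderTable()
    for _ in range(2):
        wire = encoder.encode(request_headers)
        print(wire, "->", decoder.decode(wire))
```

The first request pays for the full strings. The second sends only small integers, because both sides updated their tables in lockstep.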

3. Flow Control and Backpressure

To prevent a fast producer from overwhelming a slow consumer, gRPC relies on HTTP/2 flow control. By default, each stream opens with a flow-control window of 65,535 bytes (about 64 KB). Every DATA byte sent is deducted from the window. If the window hits zero, the sender must pause until the receiver grants more credit with a WINDOW_UPDATE frame. This creates natural, network-level backpressure that prevents memory exhaustion.
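Here is a toy model of per-stream flow control. `DEFAULT_INITIAL_WINDOW` is the HTTP/2 default of 65,535 bytes, and the `StreamSender` class is hypothetical. Real HTTP/2 also keeps a separate connection-level window, and only DATA frames count against either one. gRPC implementations often enlarge or auto-tune these windows.

```python
DEFAULT_INITIAL_WINDOW = 65_535  # HTTP/2 default SETTINGS_INITIAL_WINDOW_SIZE, in bytes


class StreamSender:
    """Toy per-stream flow control: sent DATA bytes spend credit, WINDOW_UPDATE refills it."""

    def __init__(self, window: int = DEFAULT_INITIAL_WINDOW) -> None:
        self.window = window

    def send(self, pending: int) -> int:
        """Send as much of `pending` as the window allows; return the bytes actually sent."""
        sent = min(pending, self.window)
        self.window -= sent
        return sent

    def on_window_update(self, increment: int) -> None:
        """Receiver processed data and freed buffer space: grant more credit."""
        self.window += increment


if __name__ == "__main__":
    sender = StreamSender()
    backlog = 100_000
    backlog -= sender.send(backlog)   # 65,535 bytes go out, window is now 0
    print(sender.send(backlog))       # blocked: nothing more can be sent
    sender.on_window_update(16_384)   # the consumer catches up
    print(sender.send(backlog))       # 16,384 more bytes flow
```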

IV. The Blueprint: Defining the Law in .proto

A .proto file is the absolute law. It defines the message types and the service interface.

1. The Message: Data with Discipline

Messages are the fundamental unit of data exchange. Unlike JSON, where keys like "user_id" are repeated in every single object, Protobuf treats your data as a stream of Tag-Value pairs.

Every field in a message has a Field Number, which identifies the field in the binary format. On the wire, each field starts with a key computed as:

$$\text{key} = (\text{field\_number} \ll 3) \mid \text{wire\_type} = 8 \cdot \text{field\_number} + \text{wire\_type}$$

- Field Number: The unique ID you assign (e.g. 1, 2, 3, ...).
- Wire Type: A 3-bit value representing the category of data (0 for varints, 1 for 64-bit, 2 for length-delimited, 5 for 32-bit, etc.).

The Field-15 Threshold (Optimization Tip): Because the key is encoded as a varint, field numbers 1 through 15 take only one byte to encode, including the wire type. Field numbers 16 through 2047 take two bytes. Always reserve 1–15 for your hot-path fields (IDs, timestamps, or status codes), the ones that appear in every request.
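You can check the threshold by computing the key, `(field_number << 3) | wire_type`, and counting how many 7-bit varint groups it needs. This is a small stdlib-only sketch, and the helper names are illustrative.

```python
def make_key(field_number: int, wire_type: int) -> int:
    """Field key: field number shifted left by 3, OR'd with the 3-bit wire type."""
    if not 0 <= wire_type <= 7:
        raise ValueError("wire type must fit in 3 bits")
    return (field_number << 3) | wire_type


def varint_size(value: int) -> int:
    """Bytes needed to hold a non-negative value as a varint (7 payload bits per byte)."""
    size = 1
    while value >= 0x80:
        value >>= 7
        size += 1
    return size


def key_size(field_number: int, wire_type: int = 0) -> int:
    return varint_size(make_key(field_number, wire_type))


if __name__ == "__main__":
    for n in (1, 15, 16, 2047, 2048):
        print(f"field {n:>4}: key 0x{make_key(n, 0):x}, {key_size(n)} byte(s)")
```

Field 15 with the largest wire type still gives a key of 127, which fits in one byte. Field 16 gives a key of 128, which needs a second byte.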

Advanced Message Constructs

```protobuf
syntax = "proto3";

package tech.blog.v1;

import "google/protobuf/timestamp.proto"; // using Well-Known Types (WKTs)

message UserProfile {
  // 1-15: hot-path fields (one-byte keys)
  uint64 id = 1;
  string username = 2;
  google.protobuf.Timestamp last_login = 3;

  // oneof: at most one of these is set (can save memory in generated code)
  oneof contact_method {
    string email = 4;
    string phone_number = 5;
  }

  // maps are compact but unordered: don't rely on iteration order
  map<string, string> metadata = 6;

  // nested messages for logical grouping
  message Location {
    double latitude = 1;
    double longitude = 2;
  }
  Location home_address = 7;

  // reserved tags: preventing future catastrophes
  // when you delete a field, reserve its number and name so no one reuses them later
  reserved 8, 9, 10 to 12;
  reserved "obsolete_field_name";
}
```
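To see how fields 1 and 2 of `UserProfile` actually land on the wire, here is a hand-rolled encoder for just those two fields. It uses only the standard library; in practice you would call the generated class's `SerializeToString()`. It shows the length-delimited wire type (2), which strings, bytes, nested messages like `Location`, and map entries all use. The function names are illustrative.

```python
def encode_varint(value: int) -> bytes:
    """Base-128 varint for non-negative ints: 7 bits per byte, MSB = 'more follows'."""
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)


def encode_user_profile(user_id: int, username: str) -> bytes:
    """Hand-encode only field 1 (uint64 id) and field 2 (string username)."""
    out = bytearray()
    if user_id:                               # proto3 skips fields left at their default
        out += encode_varint((1 << 3) | 0)    # key: field 1, wire type 0 (varint)
        out += encode_varint(user_id)
    if username:
        raw = username.encode("utf-8")
        out += encode_varint((2 << 3) | 2)    # key: field 2, wire type 2 (length-delimited)
        out += encode_varint(len(raw))        # length prefix in bytes
        out += raw
    return bytes(out)


if __name__ == "__main__":
    print(encode_user_profile(150, "ada").hex(" "))
```

The output reads as three parts: `08 96 01` for the id, then `12 03` (key and length) for the username, followed by the three UTF-8 bytes of "ada".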

2. The Enum: Eradicating Magic Strings

Enums in Protobuf are not just lists; they are rigid taxonomies.

The Zero-Value Law: In proto3, the first element of every enum must have the value 0. This is the default: if a field is not set, it reads back as this zero-valued element.

- Always name your 0 value UNKNOWN or UNSPECIFIED. This allows you to distinguish between “the user chose the first option” and “the client didn’t send this field at all.”

```protobuf
enum AccountStatus {
  ACCOUNT_STATUS_UNSPECIFIED = 0; // safe default
  ACCOUNT_STATUS_PENDING = 1;
  ACCOUNT_STATUS_ACTIVE = 2;
  ACCOUNT_STATUS_SUSPENDED = 3;
  ACCOUNT_STATUS_DELETED = 4 [deprecated = true]; // graceful retirement
}
```
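Here is the reader's side of the zero-value law, sketched with a hand-written `IntEnum` that mirrors `AccountStatus`. This is not generated code. The sketch assumes the message has already been decoded into a dict mapping field numbers to raw integers, and the field number used in the example is hypothetical.

```python
from enum import IntEnum


class AccountStatus(IntEnum):
    """Hand-written mirror of the AccountStatus enum above (not generated code)."""
    ACCOUNT_STATUS_UNSPECIFIED = 0
    ACCOUNT_STATUS_PENDING = 1
    ACCOUNT_STATUS_ACTIVE = 2
    ACCOUNT_STATUS_SUSPENDED = 3
    ACCOUNT_STATUS_DELETED = 4


def read_status(decoded_fields: dict[int, int], field_number: int) -> AccountStatus:
    """A field that never arrived decodes to 0, i.e. UNSPECIFIED, never PENDING."""
    raw = decoded_fields.get(field_number, 0)
    try:
        return AccountStatus(raw)
    except ValueError:
        # This sketch folds unknown values (e.g. from a newer sender) into UNSPECIFIED;
        # real proto3 runtimes usually keep the raw integer instead.
        return AccountStatus.ACCOUNT_STATUS_UNSPECIFIED


if __name__ == "__main__":
    STATUS_FIELD = 3  # hypothetical field number for a status field
    print(read_status({}, STATUS_FIELD).name)
    print(read_status({STATUS_FIELD: 2}, STATUS_FIELD).name)
```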

3. The Service: Defining the Contract

The service block is where you define the entry points to your application. This is where we specify the communication pattern (Unary vs. Streaming).

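```protobuf
import "google/protobuf/empty.proto"; // for google.protobuf.Empty

// UserRequest, UserStatsResponse, Event, LogEntry, UploadSummary, Delta and
// StateSync are assumed to be defined elsewhere (in this file or an import).
service AnalyticsService {
  // Unary: classic request-response
  rpc GetUserStats(UserRequest) returns (UserStatsResponse);

  // Server streaming: great for live feeds
  rpc StreamGlobalEvents(google.protobuf.Empty) returns (stream Event);

  // Client streaming: ideal for bulk uploads
  rpc UploadLogs(stream LogEntry) returns (UploadSummary);

  // Bidirectional streaming: the high-performance chat model;
  // both sides can send and receive whenever they want over one stream
  rpc RealTimeCollaboration(stream Delta) returns (stream StateSync);
}
```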
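Here are the four shapes from `AnalyticsService` sketched in plain Python, with dicts and strings standing in for the generated message classes. All field names and values are hypothetical placeholders. What matters is which side is an iterator. grpcio's generated servicers follow a similar pattern, with an extra context argument and a `request_iterator` parameter on client-streaming and bidirectional methods.

```python
from collections.abc import Iterable, Iterator


def get_user_stats(request: dict) -> dict:
    """Unary: one request in, one response out."""
    return {"user_id": request["user_id"], "logins": 3}  # placeholder value


def stream_global_events(request: dict) -> Iterator[str]:
    """Server streaming: one request in, a stream of responses out."""
    for n in range(3):
        yield f"event-{n}"


def upload_logs(entries: Iterable[str]) -> dict:
    """Client streaming: consume the whole request stream, then answer once."""
    return {"accepted": sum(1 for _ in entries)}


def real_time_collaboration(deltas: Iterable[str]) -> Iterator[str]:
    """Bidirectional: respond to each incoming delta as it arrives."""
    version = 0
    for delta in deltas:
        version += 1
        yield f"v{version}:{delta}"


if __name__ == "__main__":
    print(get_user_stats({"user_id": 7}))
    print(list(stream_global_events({})))
    print(upload_logs(iter(["log-a", "log-b"])))
    print(list(real_time_collaboration(iter(["x", "y"]))))
```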

4. Low-Level Bits: Field Rules and Encoding

Why does gRPC feel so much faster? Because it avoids the String Scanning overhead of JSON.

- Optionality: In proto3, a scalar field left at its default value (0, an empty string, false) is simply not written, so it takes up no space on the wire. The flip side is that the receiver can’t tell “set to 0” from “never set” unless you mark the field with the optional keyword.
- Packed Repeated Fields: When you define repeated int32 ids = 1;, proto3 uses packed encoding by default. Instead of repeating the key for every element, it sends the key once, followed by the total length in bytes, and then the values back to back.
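Here is a side-by-side sketch of `repeated int32 ids = 1` holding `[1, 2, 3]`, encoded both ways. The packed form is proto3's default. The unpacked form is what proto2 uses unless `[packed = true]` is set. The sketch assumes non-negative values and uses only the standard library.

```python
def encode_varint(value: int) -> bytes:
    """Base-128 varint for non-negative ints."""
    if value < 0:
        raise ValueError("this sketch handles non-negative values only")
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)


def encode_unpacked(field_number: int, values: list[int]) -> bytes:
    """One key (wire type 0) in front of every element."""
    out = bytearray()
    for v in values:
        out += encode_varint(field_number << 3 | 0)
        out += encode_varint(v)
    return bytes(out)


def encode_packed(field_number: int, values: list[int]) -> bytes:
    """One key (wire type 2), one byte-length prefix, then the varints back to back."""
    body = b"".join(encode_varint(v) for v in values)
    return encode_varint(field_number << 3 | 2) + encode_varint(len(body)) + body


if __name__ == "__main__":
    ids = [1, 2, 3]
    print("unpacked:", encode_unpacked(1, ids).hex(" "))
    print("packed:  ", encode_packed(1, ids).hex(" "))
```

The savings grow with the list: unpacked pays one key byte per element, while packed pays a fixed key plus a length prefix.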

V. The Math of Serialization: Engineering for Bits

Protobuf uses a few specific encoding tricks to keep your payload small.

1. Base-128 Varints

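Instead of dedicating a fixed 4 bytes to an integer (which is wasteful for small numbers), Protobuf uses varints. Each byte carries 7 bits of the value, least-significant group first. The Most Significant Bit (MSB) of each byte acts as a continuation flag: 1 means another byte follows, and 0 means this is the last one.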

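The number 150 would normally take 4 bytes in a standard int32. In Protobuf, it is split into 7-bit groups and transmitted in just two bytes: 0x96 0x01. In binary, 150 is 1001 0110. Its low 7 bits (001 0110, or 0x16) go first with the continuation bit set, giving 0x96. The remaining bit (1) follows as 0x01.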
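Here is a minimal varint encoder that follows those rules, compared against a fixed-width int32 packed with `struct`. It uses only the standard library and handles non-negative values only.

```python
import struct


def encode_varint(value: int) -> bytes:
    """Base-128 varint: 7 payload bits per byte, least-significant group first."""
    if value < 0:
        raise ValueError("this sketch handles non-negative values only")
    out = bytearray()
    while True:
        group = value & 0x7F            # take the low 7 bits
        value >>= 7
        if value:
            out.append(group | 0x80)    # more groups follow: set the continuation bit
        else:
            out.append(group)           # final group: MSB stays 0
            return bytes(out)


if __name__ == "__main__":
    for n in (1, 150, 300, 16_384):
        fixed = struct.pack("<i", n)    # a fixed-width int32 always costs 4 bytes
        varint = encode_varint(n)
        print(f"{n:>6}: varint {varint.hex()} ({len(varint)} B) vs fixed {len(fixed)} B")
```

Small numbers win big. The trade-off shows up past 2^21, where a varint needs 4 or more bytes. For values that are usually huge, Protobuf offers fixed32 and fixed64 instead.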

2. ZigZag Encoding for Negative Numbers

Standard integers represent negative numbers using two’s complement. With the plain int32 and int64 types, even a simple -1 is sign-extended to 64 bits. The continuation bit then stays set through nine bytes, and the varint balloons to the full 10 bytes.

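Protobuf fixes this with the sint32 and sint64 types, which use ZigZag encoding. ZigZag maps signed integers to the unsigned domain so that small magnitudes stay small: 0 → 0, -1 → 1, 1 → 2, -2 → 3, and so on. For sint32, the mapping is (n << 1) ^ (n >> 31), using an arithmetic right shift.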

The value -1 is mapped to 1, allowing it to compress into a single byte.
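Here is a small sketch of ZigZag for 32-bit values, alongside the varint size of -1 as a plain int32, which is sign-extended to 64 bits on the wire. Python's `>>` on negative ints is already an arithmetic shift, so the formula carries over directly.

```python
def zigzag_encode32(n: int) -> int:
    """Map a signed 32-bit int to unsigned: 0->0, -1->1, 1->2, -2->3, ..."""
    if not -(2**31) <= n < 2**31:
        raise ValueError("out of int32 range")
    return (n << 1) ^ (n >> 31)   # n >> 31 is 0 for n >= 0 and -1 (all ones) for n < 0


def zigzag_decode32(z: int) -> int:
    """Inverse mapping: even -> non-negative, odd -> negative."""
    return (z >> 1) ^ -(z & 1)


def varint_size(value: int) -> int:
    """Bytes needed for a non-negative value as a varint."""
    size = 1
    while value >= 0x80:
        value >>= 7
        size += 1
    return size


if __name__ == "__main__":
    as_int32 = (-1) & (2**64 - 1)          # how int32 -1 is written: 64-bit two's complement
    print("int32  -1:", varint_size(as_int32), "bytes")
    print("sint32 -1:", varint_size(zigzag_encode32(-1)), "byte")
```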

3. Field Numbers: The Field-15 Threshold

In Protobuf, the field number and wire type are packed into a single varint key. In the key's first byte, 3 bits are reserved for the wire type and 1 bit for the MSB continuation flag, which leaves only 4 bits for the field number.

Fields 1 to 15: Encoded in 1 byte.

Fields 16 to 2047: Encoded in 2 bytes.

Maxim: Always assign 1-15 to your highest-frequency data.

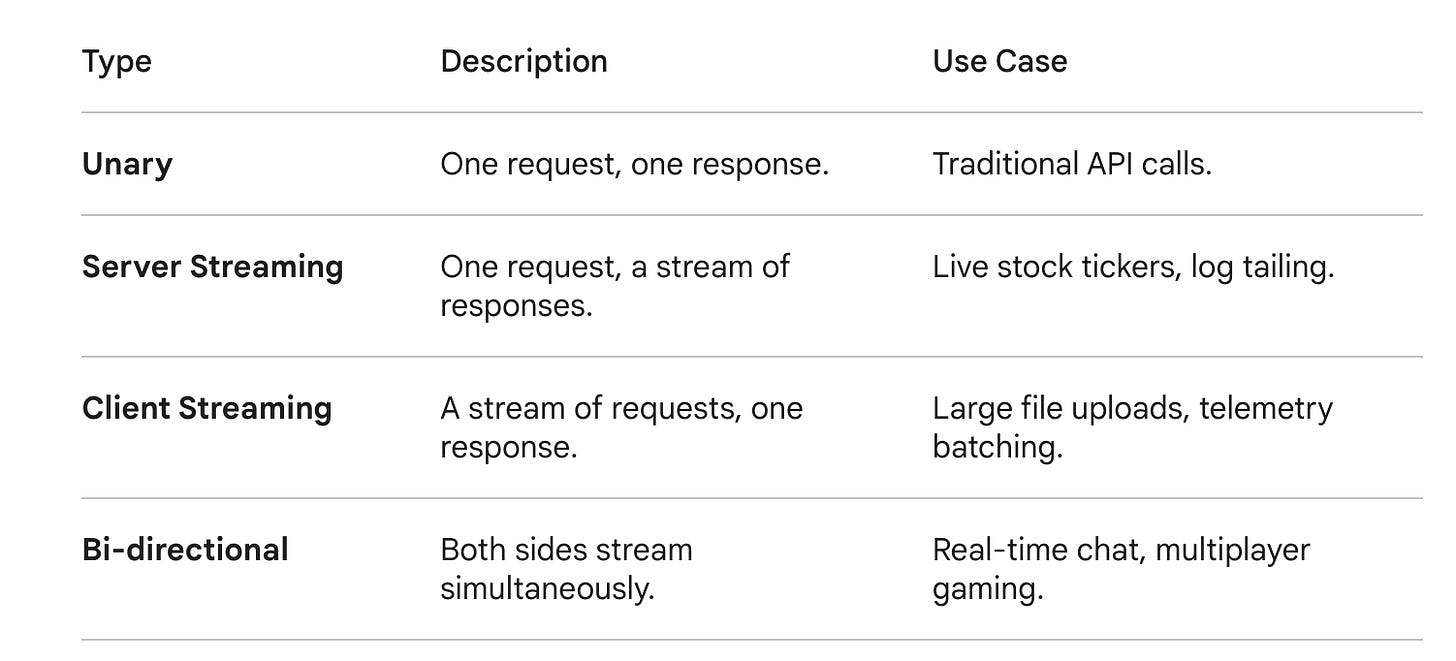

VI. The Four Modes of Streaming

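gRPC breaks the request-response prison of traditional REST. The AnalyticsService contract above uses all four modes:

- Unary: one request, one response (GetUserStats).
- Server streaming: one request, then a stream of responses; great for live feeds (StreamGlobalEvents).
- Client streaming: a stream of requests, then one response; ideal for bulk uploads (UploadLogs).
- Bidirectional streaming: both sides send and receive independently over one stream (RealTimeCollaboration).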

VII. Optimization and Architectural Maxims

L7 Load Balancing: A standard L4 (TCP) load balancer balances connections, not requests. Since gRPC multiplexes everything over one long-lived TCP connection, an L4 balancer will pin all of a client’s traffic to one backend pod. Use an L7 proxy (like Envoy) that inspects the HTTP/2 streams and distributes individual calls, or let the gRPC client balance across backends itself.
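Here is a toy simulation of why the layer matters. Both balancers use round-robin, which is an assumption for illustration, and the pod names are hypothetical. The only difference is whether the routing decision happens once per TCP connection (L4) or once per RPC stream (L7).

```python
from itertools import cycle

BACKENDS = ["pod-a", "pod-b", "pod-c"]  # hypothetical backend pods


def l4_balance(connections: list[int]) -> dict[str, int]:
    """L4: choose a backend once per TCP connection; every RPC on it follows."""
    picker = cycle(BACKENDS)
    load = dict.fromkeys(BACKENDS, 0)
    for rpc_count in connections:
        load[next(picker)] += rpc_count
    return load


def l7_balance(connections: list[int]) -> dict[str, int]:
    """L7: the proxy sees each HTTP/2 stream and routes every RPC on its own."""
    picker = cycle(BACKENDS)
    load = dict.fromkeys(BACKENDS, 0)
    for rpc_count in connections:
        for _ in range(rpc_count):
            load[next(picker)] += 1
    return load


if __name__ == "__main__":
    one_client = [9]  # one long-lived gRPC connection carrying 9 RPCs
    print("L4:", l4_balance(one_client))
    print("L7:", l7_balance(one_client))
```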

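Absolute Deadlines over Timeouts: A typical REST client sets a relative timeout per hop (“wait 5s”). gRPC lets you set a deadline, a point in time after which the call is abandoned. On the wire it travels as the remaining time (the grpc-timeout header), so each hop reconstructs it against its own clock. When services pass the incoming context into their outgoing calls, the same deadline propagates down the entire microservice chain. If a service halfway down the chain sees the deadline has passed, it drops the request instantly, preventing zombie processing. It also pairs naturally with circuit breaking.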
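Here is a single-process sketch of a chain of hypothetical services sharing one absolute deadline. `time.sleep` stands in for real work, and `call_chain` and `DeadlineExceeded` are illustrative names, not a gRPC API. Each hop computes its remaining budget from the shared deadline instead of starting a fresh timeout. Real gRPC does this across processes via the grpc-timeout header.

```python
import time


class DeadlineExceeded(Exception):
    """Raised when the shared deadline runs out (mirrors DEADLINE_EXCEEDED)."""


def call_chain(hop_costs: list[float], deadline: float) -> list[str]:
    """Run hypothetical services in sequence under ONE absolute deadline.

    hop_costs[i] is how long service i needs (seconds); deadline is an
    absolute time.monotonic() value shared by every hop.
    """
    log = []
    for i, cost in enumerate(hop_costs):
        remaining = deadline - time.monotonic()  # the budget a hop would forward downstream
        if remaining <= 0:
            raise DeadlineExceeded(f"hop {i}: deadline passed, request dropped")
        if cost > remaining:
            time.sleep(remaining)                # work until the deadline, then give up
            raise DeadlineExceeded(f"hop {i}: cancelled mid-flight")
        time.sleep(cost)
        log.append(f"hop {i} done")
    return log


if __name__ == "__main__":
    deadline = time.monotonic() + 0.05           # 50 ms for the whole chain
    try:
        print(call_chain([0.02, 0.02, 0.02], deadline))
    except DeadlineExceeded as err:
        print("aborted:", err)
```

With per-hop 50 ms timeouts, the same chain would happily run for 60 ms. With one shared deadline, the last hop knows the caller has already given up.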

Rich Error Handling: Stop using 404 for everything. gRPC uses a strict taxonomy of status codes: OK plus 16 error codes (e.g. PERMISSION_DENIED, RESOURCE_EXHAUSTED). Use the google.rpc.Status model to attach strongly typed error details, which travel in the response trailers (the grpc-status-details-bin trailer).
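Here are the canonical gRPC status codes as a plain `IntEnum`, plus a hypothetical policy for mapping application exceptions onto them. grpcio ships its own `grpc.StatusCode` enum, which is what you would use in real code. The exception-to-code mapping below is illustrative only.

```python
from enum import IntEnum


class StatusCode(IntEnum):
    """The canonical gRPC status codes: OK plus 16 error codes."""
    OK = 0
    CANCELLED = 1
    UNKNOWN = 2
    INVALID_ARGUMENT = 3
    DEADLINE_EXCEEDED = 4
    NOT_FOUND = 5
    ALREADY_EXISTS = 6
    PERMISSION_DENIED = 7
    RESOURCE_EXHAUSTED = 8
    FAILED_PRECONDITION = 9
    ABORTED = 10
    OUT_OF_RANGE = 11
    UNIMPLEMENTED = 12
    INTERNAL = 13
    UNAVAILABLE = 14
    DATA_LOSS = 15
    UNAUTHENTICATED = 16


# Hypothetical policy: map application errors onto the taxonomy instead of one catch-all.
ERROR_MAP = {
    KeyError: StatusCode.NOT_FOUND,
    PermissionError: StatusCode.PERMISSION_DENIED,
    ValueError: StatusCode.INVALID_ARGUMENT,
    TimeoutError: StatusCode.DEADLINE_EXCEEDED,
}


def to_status(exc: Exception) -> StatusCode:
    """Pick the most specific code for an exception; unmapped failures become INTERNAL."""
    for exc_type, code in ERROR_MAP.items():
        if isinstance(exc, exc_type):
            return code
    return StatusCode.INTERNAL


if __name__ == "__main__":
    for err in (KeyError("user 42"), PermissionError(), RuntimeError("boom")):
        print(type(err).__name__, "->", to_status(err).name)
```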

The Verdict

In 2026, as AI workloads and high-frequency data become the norm, the REST tax is becoming unaffordable. While REST is for humans, gRPC is for machines.

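The payoff can be dramatic: figures like a 25 ms median latency where REST delivers 250 ms, a 20–40% reduction in p99 latency, and a 2–3x throughput improvement, all while consuming 40% less CPU. Treat such numbers as directional rather than guaranteed. The real gains depend on payload shape, network, and implementation, so benchmark your own workload.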

We don't choose gRPC because it’s a trend; we choose it because it respects the hardware. It’s leaner, faster, and built to survive the storm.

Appreciate you taking the time to walk through the architecture with me.